Research Program

The study of collective emergent phenomena through the development of experimentally validated multiscale computational models provides significant opportunities for transformative materials research.

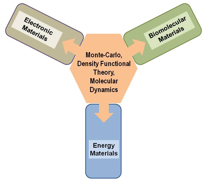

A major research focus of the Alliance will be to develop and experimentally validate common computational tools essential for three Science Driver (SD) areas that are current strengths of the State and of great technological and economic importance: (1) electronic materials, (2) energy materials, and (3) biomolecular materials. The Alliance has roughly equal numbers of computational scientists/theorists and experimentalists, and the proposed research budget is allocated accordingly. The research activities of the Alliance will be organized through three SD teams and one computational team. Alliance computational scientists will be members of the computational team and of at least one SD team. The SD teams will develop new formalisms and methods for tackling multiple length and time scales and multiscale interactions and correlations. The computational team will include experts who will help translate these formalisms and methods into algorithms and codes that take advantage of current and anticipated high-performance computing platforms. The commonality of these tools will be a major factor that tightly integrates the three Science Drivers. The SD teams will use the tools to generate testable predictions for systems of great technological and scientific interest. These predictions will be validated by experimentalists on the SD teams, using existing materials research facilities in the State. A feedback loop between the experimentalists and the other team members will refine the formalisms while also strengthening collaboration among experimentalists, theorists, and computational scientists. Students and postdocs will work in a multidisciplinary team environment, a unique experience that enhances the training and educational impact of this project. The overlapping team memberships will ensure that advances in one area are rapidly communicated to the others.
Ultimately, the experimentally validated computational approaches will enable LA-SiGMA to advance simulation-guided materials science and position the State to compete effectively for a federally funded center of excellence in simulation-guided materials applications.

Common Computational Tools for Multiscale Simulations

The "glue" that holds the three SDs together (Fig. 1) consists of the formalisms, algorithms, and codes to be developed during the course of this project. To achieve the goals of this RII project, we have therefore assembled a cybertools and cyberinfrastructure (CTCI) group of 27 participants, led by Jha (LSU), that will allow Alliance members to use the next generation of supercomputers, including Blue Waters, more efficiently. The CTCI group will have four focus areas: (i) Novel Architectures [Ramanujam (LSU)], (ii) Execution Management Tools and Environment [Allen (LSU)], (iii) Visualization [Jana (SU)], and (iv) Distributed Data Management and Provisioning [Kosar (LSU)]. Present state-of-the-art petaflop computers have tens of thousands of processors. Alliance members have extensive experience with these machines and have achieved efficient scaling to 10,000 processors. The next generation of hyperparallel, heterogeneous, and multicore machines will present additional challenges that can only be overcome with the shared experience of the CTCI teams, applied mathematicians [Lipton and Bourdin (LSU), Dai (LA Tech), and Li (SU)], and experts in high-performance computing [Jha, Sterling, Ramanujam (LSU), and Leangsuksun (LA Tech)]. The CTCI group will build upon the current RII-Cybertools project to provide the end-to-end computational tools, environments, and capabilities needed to enhance the utilization and productivity of high-performance and distributed cyberinfrastructure (CI). This will enable the Alliance's computational capabilities to both Scale-Up and Scale-Out. A balance of the two is required both to use the next generation of high-end machines (e.g., Blue Waters) effectively and to prepare the Alliance for the next phase of distributed national CI, TeraGrid XD (mid-2011). The Alliance will target three formalisms/algorithms that will form a transformational "common" toolkit for the Alliance's computational scientists.

Fig. 1. Science Drivers are linked by common computational tools.

Next Generation Monte Carlo Codes [Pratt (Tulane), Jarrell (LSU), Mobley (UNO)]. Monte Carlo (MC) simulations can bypass long time scales by directly calculating the free energies associated with activated (long-time) processes and by allowing dynamical properties to be studied without following the dynamics serially. MC methods are employed in studies of phase equilibria, nucleation, protein folding, and electronic structure, and will be used by all three SD teams. They allow a simulation to be split into independent processes representing, for instance, different realizations of quantum state behavior, different parameter values (such as temperature), or simply subdivisions of the MC Markov process. Many MC codes are therefore inherently parallelizable. However, existing codes will not be efficient on the next generation of multicore, hyperparallel, or heterogeneous machines being developed as part of the 21st-century CI. Many of these inefficiencies can be resolved by developing hybrid-parallel codes (for example, combining message passing between nodes with multithreading within a node). The MC team will work closely with experts such as Ramanujam (CTCI group) to develop a suite of next-generation MC codes that will be used by all the SD teams.
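The parallelization argument above can be illustrated with a toy example. The sketch below is a minimal illustration, not the Alliance's code: it assumes a one-dimensional harmonic potential U(x) = x²/2 with k_B = 1 (chosen because the exact answer is ⟨x²⟩ = T), and the function names are hypothetical. It runs one Metropolis chain per temperature; the chains share no state, so each could be dispatched to a separate process, MPI rank, or node. It does not attempt the hybrid-parallel design described in the text.

```python
import math
import random


def metropolis_chain(temperature, n_steps, seed, step=None):
    """Sample U(x) = x**2 / 2 with Metropolis MC and return the mean of x**2.

    Toy model (an assumption for illustration): with k_B = 1 the exact
    result is <x**2> = temperature, which makes the estimate easy to check.
    """
    rng = random.Random(seed)  # private generator: chains share no state
    if step is None:
        step = 2.0 * math.sqrt(temperature)  # trial move scaled to thermal width
    x, energy, acc = 0.0, 0.0, 0.0
    for _ in range(n_steps):
        x_trial = x + rng.uniform(-step, step)
        e_trial = 0.5 * x_trial * x_trial
        delta = e_trial - energy
        # Metropolis criterion: accept downhill moves, uphill with exp(-dE/T)
        if delta <= 0.0 or rng.random() < math.exp(-delta / temperature):
            x, energy = x_trial, e_trial
        acc += x * x
    return acc / n_steps


def run_ensemble(temperatures, n_steps, base_seed=12345):
    """Run one independent chain per temperature.

    Each call depends only on its own arguments, so this loop could be
    distributed without communication until results are gathered.
    """
    return {
        t: metropolis_chain(t, n_steps, seed=base_seed + i)
        for i, t in enumerate(temperatures)
    }


if __name__ == "__main__":
    for t, x2 in run_ensemble([0.5, 1.0, 2.0], 100_000).items():
        print(f"T = {t}: <x^2> = {x2:.3f} (exact {t})")
```

The same pattern covers the other splitting mentioned in the text: several chains at one temperature with different seeds (a subdivision of the Markov process) whose averages are combined at the end.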

Massively Parallel Density Functional Theory and Force Field Methods [Perdew (Tulane), Wick (LA Tech), Bagayoko (SU)]. Density Functional Theory (DFT) with the Generalized Gradient Approximation (GGA) has made computational chemistry an indispensable tool in all branches of the molecular sciences. One of the most successful and systematic approaches to developing density functionals with broad applicability was pioneered by Perdew at Tulane, who will lead the development of more accurate GGA functionals. The most recent functional from the Perdew group, revTPSS (2009), holds great promise as the starting point for a fully nonlocal functional needed to describe correlated systems such as transition metal oxides. Such a functional will find immediate application in two SDs: electronic and energy materials. We will also examine the M06 family of functionals from the Truhlar group, which can describe the long-range and nonbonded interactions that are important in biomaterials. The force field team led by Wick will design reactive force fields that are transferable to different state points, mixtures, and interfaces, for improved predictive ability. The computational team will help implement the new DFT functionals and force fields on multicore and heterogeneous platforms so that SD teams can perform large-scale computations. These high-performance codes will be central to advancing all three SDs. The execution management team (Allen) of the CTCI group will work closely with Perdew, Bagayoko, and Wick to enable complex workflows, ensemble runs, and multistage calculations that exploit the full potential of novel machines such as Blue Waters.
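To make "generalized gradient approximation" concrete, the sketch below evaluates the exchange energy density of PBE (Perdew, Burke, and Ernzerhof, 1996), an earlier GGA from the same group. A GGA multiplies the local density approximation (LDA) exchange by an enhancement factor F_x(s) that depends on the dimensionless density gradient s. revTPSS is a meta-GGA that also depends on the kinetic energy density and is not reproduced here. Hartree atomic units and a spin-unpolarized density are assumed, and the function names are illustrative rather than any library's API.

```python
import math

# PBE exchange parameters (Perdew, Burke, Ernzerhof, 1996)
KAPPA = 0.804               # sets the large-s limit, F_x -> 1 + KAPPA
MU = 0.2195149727645171     # gradient coefficient, beta * pi**2 / 3


def lda_exchange(n):
    """LDA exchange energy per unit volume for density n (Hartree atomic units)."""
    return -0.75 * (3.0 / math.pi) ** (1.0 / 3.0) * n ** (4.0 / 3.0)


def reduced_gradient(n, grad_n):
    """Dimensionless gradient s = |grad n| / (2 k_F n), with k_F = (3 pi^2 n)^(1/3)."""
    k_f = (3.0 * math.pi ** 2 * n) ** (1.0 / 3.0)
    return abs(grad_n) / (2.0 * k_f * n)


def pbe_enhancement(s):
    """PBE exchange enhancement factor F_x(s); equals 1 for a uniform gas (s = 0)."""
    return 1.0 + KAPPA - KAPPA / (1.0 + MU * s * s / KAPPA)


def gga_exchange(n, grad_n):
    """GGA exchange energy density: the LDA value scaled by F_x(s)."""
    return lda_exchange(n) * pbe_enhancement(reduced_gradient(n, grad_n))


if __name__ == "__main__":
    for g in (0.0, 0.05, 0.5):
        print(g, gga_exchange(0.1, g), lda_exchange(0.1))
```

Because F_x(s) ≥ 1, density gradients make the exchange energy more negative than the LDA value, and the bound F_x ≤ 1 + κ = 1.804 is the Lieb-Oxford-motivated cap built into PBE.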

Large-scale Molecular Dynamics [Ashbaugh (Tulane), Jha (LSU), Rick (UNO)]. While MC simulations can capture the statistical properties of long-time-scale processes, simulating the dynamics at the molecular level requires Molecular Dynamics (MD) methods. Following dynamics across multiple length scales (molecular to mesoscopic) demands a sophisticated and consistent treatment of the different scales. Reliable MD simulations are therefore critical for multiscale materials simulations. NAMD, GROMACS, and LAMMPS (http://lammps.sandia.gov/) are open-source classical MD codes used for modeling atomic, polymeric, biological, metallic, granular, and coarse-grained systems. New algorithms and codes based on a variational approach and hybrid MD/continuum methodologies will be developed and added as modules to the LAMMPS package. We will work in partnership with Sandia National Laboratories, the distributor of LAMMPS. LAMMPS is designed for parallel computers that support C++ compilers and the MPI message-passing library; the CTCI team will work with the SD teams to port it to next-generation supercomputers on which MPI may not be the optimal solution. The CTCI team will also lead efforts to incorporate new and existing force fields (such as ReaxFF) into LAMMPS. The visualization team (Jana) of the CTCI group will work with the MD team to develop space-time multiresolution visualization capabilities and to integrate them into existing immersive and interactive environments.
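As a minimal illustration of the core loop inside a classical MD engine, the sketch below implements velocity Verlet, the standard time-reversible integrator for constant-energy MD (LAMMPS documents its default `run_style verlet` as a velocity-Verlet integrator). The two-particle harmonic bond and its parameters are a hypothetical test system, not a force field from the text; production codes add neighbor lists, domain decomposition, and MPI communication, all omitted here.

```python
def velocity_verlet(x, v, force, mass, dt, n_steps):
    """Integrate Newton's equations with the velocity Verlet scheme.

    x, v  : lists of positions and velocities (1D for brevity)
    force : callable mapping a position list to a force list
    """
    f = force(x)
    for _ in range(n_steps):
        # half-step velocity update with the current forces
        v = [vi + 0.5 * dt * fi / mass for vi, fi in zip(v, f)]
        # full-step position update with the half-step velocities
        x = [xi + dt * vi for xi, vi in zip(x, v)]
        # forces at the new positions, then the second velocity half-step
        f = force(x)
        v = [vi + 0.5 * dt * fi / mass for vi, fi in zip(v, f)]
    return x, v


# Hypothetical test system: two particles joined by a harmonic bond
K_BOND, R0 = 1.0, 1.0


def bond_force(x):
    """Equal and opposite forces that pull the bond length back toward R0."""
    stretch = (x[1] - x[0]) - R0
    return [K_BOND * stretch, -K_BOND * stretch]


def total_energy(x, v, mass):
    stretch = (x[1] - x[0]) - R0
    return 0.5 * K_BOND * stretch ** 2 + 0.5 * mass * sum(vi * vi for vi in v)


if __name__ == "__main__":
    x0, v0 = [0.0, 1.2], [0.0, 0.0]
    x1, v1 = velocity_verlet(x0, v0, bond_force, mass=1.0, dt=0.01, n_steps=10_000)
    print(total_energy(x0, v0, 1.0), total_energy(x1, v1, 1.0))
```

Velocity Verlet is preferred over simpler schemes because its energy error stays bounded over long runs rather than drifting, which matters when trajectories are extended toward the long time scales discussed below.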

Science Drivers (SDs)

SD1: Electronic and Magnetic Materials [M. Jarrell (LSU), J. Perdew (Tulane)]. Many electronic and magnetic materials are characterized by strong correlations. They are paradigmatic examples of complex emergent phenomena that face the barrier of many length scales: these materials exhibit long-range order (on the scale of the sample size) that emerges from atomic spin, orbital, and charge degrees of freedom (on the scale of 10⁻¹⁰ m). The current state of the art uses spatially local approximations such as the Local Density Approximation (LDA) and the Dynamical Mean Field Approximation (DMFA). The goal of this SD is to transform the field by extending these methods to much larger length scales. The development of multiscale methods for strongly correlated electronic and magnetic systems is novel and will involve a team of 26 faculty, including experts in the relevant computational and DFT methods and an experimental team with expertise in a wide variety of measurement techniques. The payoff of this collaboration will be the ability, for the first time, to accurately model strongly correlated materials on national leadership supercomputers.

Strongly correlated materials have many promising applications. The 2007 International Technology Roadmap for Semiconductors stresses that highly correlated electron systems can enable new devices with greatly enhanced sensitivity to applied fields. Organic magnets are highly tunable systems; a better understanding of them will aid the design of higher-performance magnets, e.g., with higher blocking temperatures. Molecular magnets have also been studied as potential labeling and imaging agents in biology and medicine. Organic semiconductors are tunable, flexible, easier and cheaper to fabricate, and have long spin coherence lifetimes. In each case, it is the complexity of these materials that makes them promising, i.e., the ability to use competing spin, charge, or orbital ordering to gain sensitivity to various fields, or to exploit both the spin and charge degrees of freedom in spintronic organic semiconducting devices.

Strong correlations can lead to emergent phenomena, including spin, charge, and orbital ordering. The complexity is further enhanced by a competition between states that is displayed by many strongly correlated materials, including heavy-fermion materials, transition metal oxides, high-temperature superconductors, spintronic materials, manganites, and organic magnets and semiconductors. These technologically promising materials are poorly understood because of long-range spin and charge correlations, competing ground states, and complex phase diagrams. These competing states have both local (e.g., commensurate magnetism) and highly nonlocal (e.g., charge ordering) order parameters. Multiscale approaches are essential to treat both the development of long-range order and the competition between these states.

SD2: Materials for Energy Storage and Generation [L. Pratt (Tulane), C. Wick (LA Tech)]. Efficient and clean generation and use of energy are major challenges facing the nation and the world. Alliance members will study electrochemical cells and capacitors that store and deliver electrical energy, advanced materials for the storage and release of hydrogen, and catalytic reactions that generate hydrogen gas. These inquiries are tightly bound by the common barrier of multiple scales. For example, significant energy storage involves the short length scales of molecular chemistry, efficient delivery involves energy and material transport over longer length scales, and the microstructure provides material design variables. The essential length scales range from 10⁻¹⁰ m to 10⁻³ m, and the essential time scales range from 10⁻¹² s to 10³ s. Beyond this wide range of length and time scales, another barrier is the accurate treatment of intermolecular forces, whether by Quantum Mechanics (QM) or by force fields. Quantum simulation is presently limited to time scales of ~10⁻¹¹ s, while existing force fields, including coarse-grained versions, are not well tested for the energy applications of interest here and are limited to time scales much shorter than one second. The goal of this SD is to develop and apply novel DFT formulations, reactive and transferable force fields, and high-performance MC/MD methods to address these common challenges. There will be significant overlap in membership between the SD2 team and the CTCI group, as all projects involve DFT, force fields, MC/MD, visualization, and data management. We will combine these methods to treat all relevant scales. A team of 21 faculty with expertise in multiscale simulation methods, DFT, MC methods, and continuum models will perform simulations that will be validated and guided by SD2 experimentalists.
The payoff from this work will be experimentally validated multiscale models that can reliably guide the development of practical solutions to important energy-related problems. In conjunction with the CTCI group (Jha, Allen), the SD2 team will use dynamic-execution models to reduce the time-to-solution on multiple architectures.
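Counting orders of magnitude (decades) makes the spread of scales in SD2 concrete. The first three lines below use only the bounds stated in the text; the last line assumes a typical MD time step of about 1 fs (10⁻¹⁵ s), a value not taken from the text, to estimate how many steps a direct simulation of the longest essential time scale would need.

```latex
\begin{aligned}
\log_{10}\frac{10^{-3}\,\mathrm{m}}{10^{-10}\,\mathrm{m}} &= 7 &&\text{decades in length}\\
\log_{10}\frac{10^{3}\,\mathrm{s}}{10^{-12}\,\mathrm{s}} &= 15 &&\text{decades in time}\\
\frac{10^{3}\,\mathrm{s}}{10^{-11}\,\mathrm{s}} &= 10^{14} &&\text{shortfall of current quantum simulation}\\
N_{\mathrm{steps}} \approx \frac{10^{3}\,\mathrm{s}}{10^{-15}\,\mathrm{s}} &= 10^{18} &&\text{MD steps at an assumed 1 fs time step}
\end{aligned}
```

The last line illustrates why direct atomistic time integration alone cannot span these scales and why the text combines MC, reactive and coarse-grained force fields, and continuum models.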

SD3: Biomolecular Materials [Ashbaugh (Tulane), Moldovan (LSU)]. Living organisms are composed of the most complex, hierarchically organized materials known. Proteins, for example, are built from just 20 amino acids and, depending on their sequence, carry out diverse functions including catalysis, signaling, and structural support. The goal of this SD is to develop novel biomolecular material systems for the encapsulation, delivery, and release of therapeutics to targeted tissues. The barriers to achieving this goal are the need to model length scales on the order of 10⁻⁹ to 10⁻⁷ m and the lack of force fields efficient enough for simulations to reach the time scales of 10⁻⁶ to 10⁻³ s required for meaningful predictions. At present, all-atom simulations are limited to length scales below 10⁻⁸ m and time scales up to 10⁻⁷ s. Coarse-grained (CG) methods allow simulations to reach 10⁻⁵ s. A team of 14 faculty members with expertise in multiscale MD, all-atom and CG force fields, and experiment will develop, apply, and validate multiscale methods applicable to biomolecular materials. The payoff will be experimentally validated multiscale models that enable the design of novel drug delivery vehicles.

While many drug candidates demonstrate desired therapeutic effects, many also exhibit poor solubility, undesirable toxicity, or poor stability in vivo. There is thus rapidly growing interest in carrier-based delivery methods that combine the desired therapeutic effects with delivery to specific sites. Two successful approaches are the noncovalent encapsulation of drugs inside self-assembled carriers, such as micelles or liposomes, and the covalent attachment of drugs to polymer scaffolds. Self-assembled carriers offer simple, low-cost preparation but are susceptible to disaggregation in vivo. The covalent connectivity of polymer scaffolds affords hardier transport but requires potentially cost-prohibitive synthesis and drug-attachment/release chemistry. Both methodologies have proven successful, as evidenced by the recent FDA approval of liposomal formulations such as DOXIL for the treatment of Kaposi's sarcoma and by numerous polymer drug candidates in clinical trials, such as OPAXIO for recurrent ovarian cancer. Alternatively, unimolecular polymeric micelles combine the advantages of single-polymer carriers with noncovalent encapsulation and are expected to expand the range of potential targets. No single delivery strategy will be a panacea for all potential drugs and targets, so a multitiered approach to carrier design is required.

Molecular and CG simulations will winnow the range of design variables for biodelivery vehicles and circumvent time-consuming synthesis and characterization bottlenecks. The Alliance will focus on self-assembled and unimolecular delivery vehicles, covering the range of practical molecular architectures. The modeling of delivery vehicles is inherently multiscale, spanning molecular-scale, bio-specific hydrogen bonding and hydrophobic interactions to nanometer-scale changes in conformation and aggregation state. To overcome the barriers to these computational objectives, advances in model development must be made. Our coordinated efforts will aim to (1) develop new interatomic interaction potentials using DFT methods developed by Perdew (Tulane) and extend existing force fields (e.g., CHARMM, AMBER, GROMOS, OPLS) to systems containing both biological and nonbiological molecules; (2) develop new CG and accelerated simulation strategies linking the length and time scales inherent in biological systems. Wick, Chen, and Moldovan (LSU), and Ashbaugh (Tulane) will develop CG models (e.g., by extending the MARTINI force field) that preserve structure and thermodynamics between scales. Such techniques have already been developed for modeling homopolymers with molecular weights up to 10⁵ g/mol and will be extended to heterogeneous biomaterials. In addition, Wick, Chen, Hall, Pratt (Tulane), Rick (UNO), and Ashbaugh will develop advanced hybrid molecular/continuum (HMC) methods to model nonequilibrium transport barriers to delivery. CG models and HMC algorithms will be ported into LAMMPS for broad dissemination; and (3) develop advanced free energy evaluation strategies across heterogeneous computer networks to calculate the free energy differences associated with macromolecular conformational changes and drug solubilization. Mobley (UNO) will develop these techniques so that they can be used with both molecular and CG models to reliably translate results across length scales. Specific focus directions are discussed below.
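The text does not specify how the CG models will be parameterized. One standard technique for building CG pair potentials that reproduce a target structure is iterative Boltzmann inversion (IBI); it is shown here only to illustrate what "preserving structure between scales" can mean, not as the method the Alliance will use (MARTINI, mentioned above, is parameterized differently). The sketch assumes tabulated radial distribution functions g(r) on matching bins and k_BT in the potential's energy units; the CG simulation that would produce g_current at each iteration is omitted, and the function names are hypothetical.

```python
import math


def boltzmann_inversion(g_target, kT):
    """Initial CG pair potential U0(r) = -kT ln g_target(r), bin by bin."""
    return [-kT * math.log(g) for g in g_target]


def ibi_update(potential, g_current, g_target, kT, alpha=1.0, floor=1e-12):
    """One iterative Boltzmann inversion step.

    U_{i+1}(r) = U_i(r) + alpha * kT * ln(g_i(r) / g_target(r))

    Where the CG model over-populates a distance (g_i > g_target) the
    potential is raised there, and vice versa. `floor` guards against
    log(0) in empty bins; `alpha` is an optional damping factor.
    """
    return [
        u + alpha * kT * math.log(max(gc, floor) / max(gt, floor))
        for u, gc, gt in zip(potential, g_current, g_target)
    ]


if __name__ == "__main__":
    g_target = [0.5, 1.3, 1.0]  # hypothetical target g(r) on three bins
    u = boltzmann_inversion(g_target, kT=2.5)
    # pretend a CG run with u gave this structure (hypothetical numbers)
    g_cg = [0.6, 1.1, 1.0]
    print(ibi_update(u, g_cg, g_target, kT=2.5, alpha=0.5))
```

Iteration stops when the CG g(r) matches the target within tolerance; a damping factor below 1 is commonly used to keep the updates stable.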
The CTCI visualization group will provide expertise in computing spinors for molecules and other advanced visual analytical techniques. The data management and execution management groups will work closely with SD3 members to reduce the time-to-solution.
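Item (3) of the SD3 coordinated efforts does not name a specific free energy method. As one textbook example, the Zwanzig free energy perturbation (FEP) estimator, ΔF = -k_BT ln⟨exp(-ΔU/k_BT)⟩₀, computes the free energy difference between two states from energy differences ΔU = U₁ - U₀ sampled in state 0. The sketch assumes those samples already come from an MC or MD run; the function name is hypothetical. In practice, bidirectional estimators such as BAR or MBAR are usually preferred, because one-sided FEP converges poorly when the two states overlap weakly.

```python
import math
import random


def fep_zwanzig(delta_u, kT):
    """Free energy difference F1 - F0 from samples of U1 - U0 drawn in state 0.

    A log-sum-exp shift keeps large |dU / kT| values from overflowing.
    """
    a = [-du / kT for du in delta_u]
    a_max = max(a)
    log_mean = a_max + math.log(sum(math.exp(x - a_max) for x in a) / len(a))
    return -kT * log_mean


if __name__ == "__main__":
    # Known-answer check: for Gaussian dU with mean m and variance s2,
    # the exact result is m - s2 / (2 kT).
    rng = random.Random(1)
    samples = [rng.gauss(1.0, 0.5) for _ in range(200_000)]
    print(fep_zwanzig(samples, kT=1.0), "vs exact", 1.0 - 0.25 / 2.0)
```

The Gaussian check is a convenient sanity test because the exponential average is dominated by low-ΔU samples; the same sensitivity is why poor overlap between states makes one-sided FEP unreliable in real systems.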
